You may have set the environment variables correctly, but your crawler may be written in a way that processes requests for URLs synchronously instead of submitting them to a queue. To test this, create a global object and store a counter on it. Each time your crawler starts a request, increment the counter. Each time your crawler finishes a request, decrement the counter. Then run a thread that prints the counter every second. If the counter value is always 1, you are still running synchronously.
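The counter test can be sketched roughly as follows. This is a minimal illustration and not Scrapy code: `InFlightCounter`, `fake_request`, and `run_demo` are hypothetical names, and a thread pool with `time.sleep` stands in for the crawler's real requests. In a real crawler you would keep one module-level counter and call `increment()` and `decrement()` wherever a request starts and finishes.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor
from contextlib import contextmanager


class InFlightCounter:
    """Thread-safe count of requests that have started but not finished."""

    def __init__(self):
        self._lock = threading.Lock()
        self.current = 0
        self.peak = 0  # highest value ever observed

    def increment(self):
        with self._lock:
            self.current += 1
            self.peak = max(self.peak, self.current)

    def decrement(self):
        with self._lock:
            self.current -= 1

    @contextmanager
    def track(self):
        # Increment on entry, decrement on exit, even if the request fails.
        self.increment()
        try:
            yield
        finally:
            self.decrement()


def start_monitor(counter, interval=1.0):
    """Print the counter every `interval` seconds until the returned event is set."""
    stop = threading.Event()

    def report():
        # Event.wait returns False on timeout, True once stop is set.
        while not stop.wait(interval):
            print(f"in-flight requests: {counter.current}")

    threading.Thread(target=report, daemon=True).start()
    return stop


def fake_request(counter, duration):
    # Hypothetical stand-in for one crawler request.
    with counter.track():
        time.sleep(duration)


def run_demo(workers, n_requests=4, duration=0.2):
    counter = InFlightCounter()
    stop = start_monitor(counter, interval=0.1)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        for _ in range(n_requests):
            pool.submit(fake_request, counter, duration)
    stop.set()
    return counter
```

Calling `run_demo(workers=1)` models the synchronous case, where the printed value never rises above 1. With `run_demo(workers=4)` several requests overlap, so the counter climbs higher.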

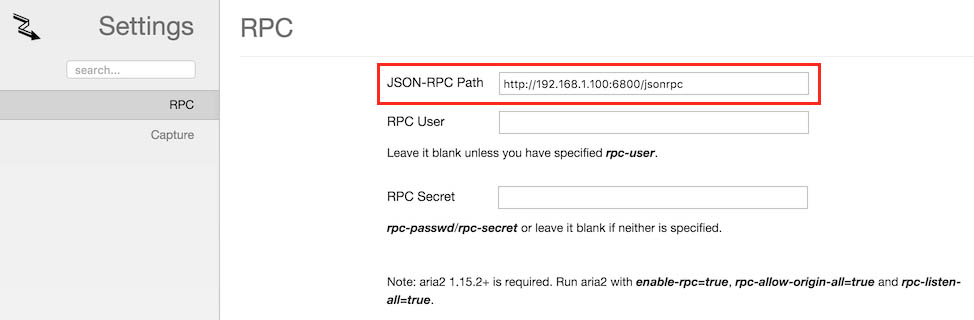

Taking a stab at an answer here with no experience of the libraries. It looks like Scrapy crawlers themselves are single-threaded. To get multi-threaded behavior you need to configure your application differently or write code that makes it behave multi-threaded. It sounds like you've already tried this, so this is probably not news to you, but make sure you have configured `CONCURRENT_REQUESTS` and `REACTOR_THREADPOOL_MAXSIZE`.

I can't imagine there is much CPU work going on in the crawling process, so I doubt it's a GIL issue. Excluding the GIL as an option, there are two possibilities here: you are missing some setup or configuration that would make the crawler multi-threaded, or the crawler code itself processes requests synchronously.

With a small file on Google Drive, you can easily download it with `aria2c -x16 -s16 -j5 '…'`. But you can't do that with a large file, because Google will show a warning about the virus scan. One project built around this combination of tools is 22digital/heroku-gdrive-buildpack on GitHub: a remote Google Drive client on Heroku using Rclone, Aria2, WinRAR and Git LFS.

Aria2 comes with some useful switches that allow you to optimize the download process:

- `-c`: Continue a partially downloaded file instead of starting it again from the beginning.
- `-i`: Use a TXT file with a list of URLs as the source. Useful for downloading multiple files in one go.
- `-j`: Followed by a number, and used in conjunction with an option like the previous one, it defines how many files aria2 can download in parallel. If, for example, you use an input file containing 20 URLs and add `-j 3`, aria2 will start downloading three of those files in parallel. When one of them completes, it will move to the next one on the list.
- `-o`: Allows you to define an output name for the downloaded file. Useful to, for example, turn "21820198465.mp4" back into "our_vacation_video.mp4" without having to rename the file manually after the download completes.
- `-x`: Number of parallel connections for each download. Not to be confused with the `-j` switch, this splits a file into multiple chunks and downloads them through parallel connections to maximize the download speed. It's worth noting, though, that many file hosts limit the number of connections allowed, since they drain their resources. Typical web servers usually allow up to eight connections in parallel, but you may find that some file servers restrict you even down to a single connection.

If you are looking for a user interface for this command-line tool, you should check out Persepolis, which is a GUI for Aria2.

You can use those switches together and even mix different sources (like HTTP and BitTorrent) in a single file_list.txt that you use as an input.
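As a rough sketch of how these switches combine, the snippet below assembles an `aria2c` command line from Python. The helper names `build_aria2c_command` and `download_all` are hypothetical, the file name `file_list.txt` is only an example, and the default values are illustrative rather than recommended. It assumes `aria2c` is installed and on your `PATH`, and it uses only the `-c`, `-i`, `-j` and `-x` switches described above.

```python
import shlex
import subprocess


def build_aria2c_command(input_file, parallel_files=3,
                         connections_per_file=8, resume=True):
    """Return the aria2c argument list for a batch download."""
    # aria2 accepts between 1 and 16 connections per server for -x.
    if not 1 <= connections_per_file <= 16:
        raise ValueError("connections_per_file must be between 1 and 16")
    if parallel_files < 1:
        raise ValueError("parallel_files must be at least 1")

    cmd = ["aria2c"]
    if resume:
        cmd.append("-c")                      # continue partial downloads
    cmd += ["-i", input_file]                 # text file with one URL per line
    cmd += ["-j", str(parallel_files)]        # files downloaded at once
    cmd += ["-x", str(connections_per_file)]  # connections per download
    return cmd


def download_all(input_file, **options):
    """Run aria2c with the assembled switches (requires aria2c to be installed)."""
    cmd = build_aria2c_command(input_file, **options)
    print("running:", shlex.join(cmd))
    return subprocess.run(cmd, check=True)
```

For example, `download_all("file_list.txt", parallel_files=3)` would fetch three files from the list at a time. If a host restricts parallel connections, lowering `connections_per_file` is the first thing to try.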
